Back in 2022, I participated in the Google’s foo.bar challenge and wrote a short writeup. While I was looking through my solutions, I noticed my solution for Bunny Worker Locations could benefit from a small improvement so that it can produce same solutions a lot faster at O(1) time complexity.

Analysis of the Previous Solution

| |

Line 3: Time complexity ofO(x)asrange(), albeit from Python 3 it’s lazy sequence, when evaluated would still iterate over[1, x+1)(or[0, x]) atsum().Line 6: Time complexity ofO(y)for the similar reason as above.Line 9: Time complexity ofO(1)as it’s a simple arithmetic.

So this function runs at O(x + y) time complexity.

New Solution using Triangular Number Formula

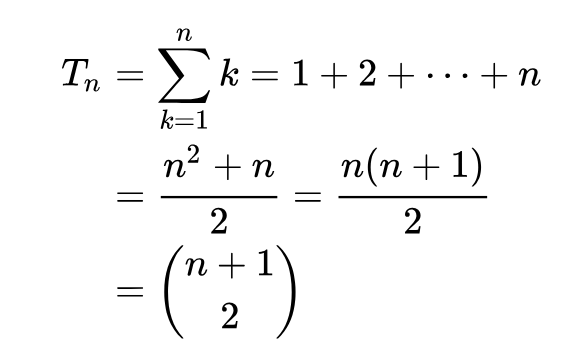

Triangular number, as Wikipedia defines it, is “the nth … is the number of dots in the triangular arrangement with n dots on each side, and is equal to the sum of the n natural numbers from 1 to n.”. So what I’m doing in line 3 and 6 can be expressed using the shortcut:

Or as code:

| |

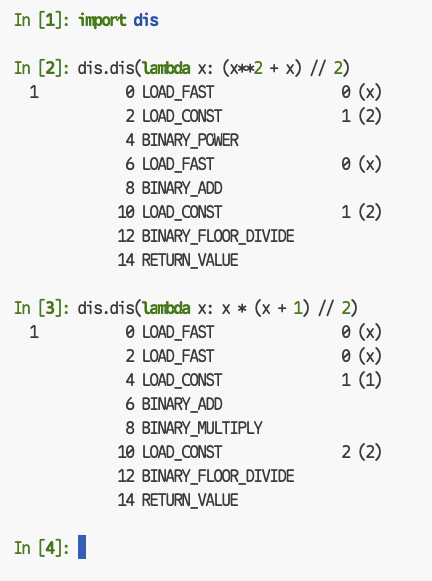

But you might be wondering why not take the distributive property and write the solution as xn = (x**2 + x) // 2? If we disassemble these two implementations, we can see both implementations have roughly similar opcodes:

But the difference is:

xn = (x * (x + 1)) // 2 : BINARY_ADD -> BINARY_MULTIPLY -> BINARY_FLOOR_DIVIDExn = (x**2 + x) // 2 : BINARY_POWER -> BINARY_ADD -> BINARY_FLOOR_DIVIDE

Where BINARY_POWER (pow()) is more expensive than BINARY_MULTIPLY, so the latter is preferred if we’re considering performance and simplicity. Now filling the remaining section for y range, the new solution becomes:

| |

Where the entire solution is a long chain of arithmetic with no function calls.

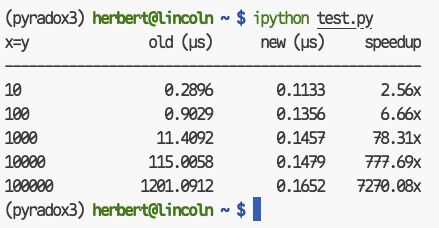

Benchmark

As (x, y) grows larger, we can see the old solution slows down whereas the new one is kept at O(1)!